Fuller at the 92Y

I’m very excited to announce that I’ll be giving a two-part online talk on the life and work of Buckminster Fuller in partnership with the 92nd Street Y on May 13 and 20. It’s hosted through Roundtable, their virtual education platform, so anyone can attend. I expect that it will be the most comprehensive talk that I’ll ever give on Fuller—I plan to cover every important episode from my biography Inventor of the Future—and I’m hoping to attract a receptive audience. If you have a chance, I’d appreciate it very much if you could spread the word—and I hope to see some of you there!

Back to the Future

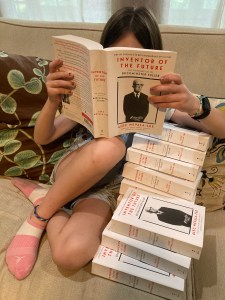

It’s hard to believe, but the paperback edition of Inventor of the Future: The Visionary Life of Buckminster Fuller is finally in stores today. As far as I’m concerned, this is the definitive version of this biography—it incorporates a number of small fixes and revisions—and it marks the culmination of a journey that started more than five years ago. It also feels like a milestone in an eventful writing year that has included a couple of pieces in the New York Times Book Review, an interview with the historian Richard Rhodes on Christopher Nolan’s Oppenheimer for The Atlantic online, and the usual bits and bobs elsewhere. Most of all, I’ve been busy with my upcoming biography of the Nobel Prize-winning physicist Luis W. Alvarez, which is scheduled to be published by W.W. Norton sometime in 2025. (Oppenheimer fans with sharp eyes and good memories will recall Alvarez as the youthful scientist in Lawrence’s lab, played by Alex Wolff, who shows Oppenheimer the news article announcing that the atom has been split. And believe me, he went on to do a hell of a lot more. I can’t wait to tell you about it.)

Ringing in the new

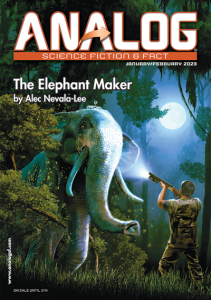

By any measure, I had a pretty productive year as a writer. My book Inventor of the Future: The Visionary Life of Buckminster Fuller was released after more than three years of work, and the reception so far has been very encouraging: it was named a New York Times Book Review Editors’ Choice, an Economist best book of the year, and, wildest of all, one of Esquire’s fifty best biographies of all time. (My own vote would go to David Thomson’s Rosebud, his fantastically provocative biography of Orson Welles.) On the nonfiction front, I rounded out the year with pieces in Slate (on the misleading claim that Fuller somehow anticipated the principles of cryptocurrency) and the New York Times (on the remarkable British writer R.C. Sherriff and his wonderful novel The Hopkins Manuscript). Best of all, the latest issue of Analog features my 36,000-word novella “The Elephant Maker,” a revised and updated version of an unpublished novel that I started writing in my twenties. Seeing it in print, along with Inventor of the Future, feels like the end of an era for me, and I’m not sure what the next chapter will bring. But once I know, you’ll hear about it here first.

A Geodesic Life

After three years of work (and more than a few twists and turns), my latest book, Inventor of the Future: The Visionary Life of Buckminster Fuller, is finally here. I think it’s the best thing that I’ve ever done, or at least the one book that I’m proudest to have written. After last week’s writeup in The Economist, a nice review ran this morning in the New York Times, which is a dream come true, and you can check out excerpts today at Fast Company and Slate. (At least one more should be running this weekend in The Daily Beast.) If you want to hear more about it from me, I’m doing a virtual event today sponsored by the Buckminster Fuller Institute, and on Saturday August 13, I’ll be holding a discussion at the Oak Park Public Library with Sarah Holian of the Frank Lloyd Wright Trust, which will also be available to view online. There’s a lot more to say here, and I expect to keep talking about Fuller for the rest of my life, but for now, I’m just delighted and relieved to see it out in the world at last.

Running it up the flagpole

Two months ago, I was browsing Reddit when I stumbled across a post that said: “TIL that the current American Flag design originated as a school project from Robert G Heft, who received a B- for lack of originality, yet was promised an A if he successfully got it selected as the national flag. The design was later chosen by President Eisenhower for the national flag of the US.” After I read the submission, which received more than 21,000 upvotes, I was skeptical enough of the story to dig deeper. The result is my article “False Flag,” which appeared today in Slate. I won’t spoil it here, but rest assured that it’s a wild ride, complete with excursions into newspaper archives, government documents, and the world of vexillology, or flag studies. It’s probably my most surprising discovery in a lifetime of looking into unlikely subjects, and I have a hunch that there might be even more to come. I hope you’ll check it out.

Inventing the future

I realize that I’m well overdue for an update on my new book, Inventor of the Future: The Visionary Life of Buckminster Fuller, which is scheduled to be published by Dey Street Books / HarperCollins on August 2. Yesterday, I delivered my revisions to the copy edit, so I’ve essentially reached the end of a process that has lasted three long and challenging years. The result, I think, is the best thing I’ve ever done. It’s a big book—over six hundred pages including the back matter and index—but Fuller more than justifies it, and I hope that it will appeal to both his existing admirers and readers who are encountering him for the first time. (Even if you’re an obsessive Fuller fan, I can guarantee that there’s a lot here that you haven’t seen before.) I’m also delighted by the cover, which features a remarkable portrait of Fuller by Richard Avedon that has rarely been reproduced elsewhere. Obviously, I’ll have a lot more to say about this over the next few months, so check back soon for more!

Allegra Fuller Snyder (1927-2021)

I was saddened to learn that Allegra Fuller Snyder passed away last Sunday at the age of 93. Allegra was a remarkable person in her own right—she was a professor emerita at UCLA and a pioneer in the field of dance ethnology—but I got to know her through the life and work of her father, Buckminster Fuller, whose biography I’ve been writing for the last three years. She navigated the challenges of that unusual legacy with intelligence and compassion, and I owe a great deal to her kindness and support. My thoughts are with her loved ones and friends.

The gigantic problem

I don’t really have other interests. My interest is in solving the problems presented by writing a book. That’s what stops my brain spinning like a car wheel in the snow, obsessing about nothing. Some people do crossword puzzles to satisfy their need to keep the mind engaged. For me, the absolutely demanding mental test is the desire to get the work right. The crude cliché is that the writer is solving the problem of his life in his books. Not at all. What he’s doing is taking something that interests him in life and then solving the problem of the book—which is, How do you write about this? The engagement is with the problem that the book raises, not with the problems you borrow from living. Those aren’t solved, they are forgotten in the gigantic problem of finding a way of writing about them.

—Philip Roth, quoted in Risky Business by Al Alvarez

Ben Bova (1932-2020)

Earlier this month, the legendary author and editor Ben Bova passed away in Florida. Bova famously took over Analog after the death of John W. Campbell, and he was the crucial figure in a transition that managed to honor the magazine’s tradition of hard science fiction while pushing into stranger, less predictable territory. By publishing stories like “The Gold at the Starbow’s End” by Frederik Pohl, “Hero” by Joe Haldeman, and “Ender’s Game” by Orson Scott Card, as well as important work by such authors as George R.R. Martin and Vonda N. McIntyre, Bova did more than anyone else to usher the Campbellian mode into the new era, and the result still embodies the genre’s possibilities for countless fans. I never met Bova in person, and I only had the chance to interview him once over the phone, but I was pleased to help out very slightly with his obituary in the New York Times. It’s a tribute that he richly deserved, and I hope that his example will endure well into the next generation of editors and writers.

Quote of the Day

When the system doesn’t respond, when it doesn’t accept what you’re doing—and most of the time it won’t—you have a chance to become self-reliant and create your own system. There will always be periods of solitude and loneliness, but you must have the courage to follow your own path. Cleverness on the terrain is the most important trait of a filmmaker.

Always take the initiative. There is nothing wrong with spending a night in a jail cell if it means getting the shot you need. Send out all your dogs and one might return with prey. Beware of the cliché. Never wallow in your troubles; despair must be kept private and brief. Learn to live with your mistakes. Study the law and scrutinize contracts. Expand your knowledge and understanding of music and literature, old and modern. Keep your eyes open. That roll of unexposed celluloid you have in your hand might be the last in existence, so do something impressive with it. There is never an excuse not to finish a film. Carry bolt cutters everywhere.

Thwart institutional cowardice. Ask for forgiveness, not permission. Take your fate into your own hands. Don’t preach on deaf ears. Learn to read the inner essence of a landscape. Ignite the fire within and explore unknown territory. Walk straight ahead, never detour. Learn on the job. Maneuver and mislead, but always deliver. Don’t be fearful of rejection. Develop your own voice. Day one is the point of no return. Know how to act alone and in a group. Guard your time carefully. A badge of honor is to fail a film theory class. Chance is the lifeblood of cinema. Guerrilla tactics are best. Take revenge if need be. Get used to the bear behind you. Form clandestine rogue cells everywhere.

The Singularity is Near

My latest science fiction story, “Singularity Day,” is currently featured on Curious Fictions, an online publishing platform that allows authors to share their work directly with readers. It was originally written as a submission to the “Op-Eds From the Future” series at the New York Times, which was sadly discontinued earlier this year, but I liked how it turned out—it’s one of my few successful attempts at a short short story—and I decided to release it in this format as a little experiment. It’s a very quick read, and it’s free, so I hope you’ll take a look!

The Return of “Retention”

Back in 2017, the audio anthology series The Outer Reach released an episode based on my dystopian two-person play “Retention,” which was performed by Aparna Nancherla and Echo Kellum. It’s one of my personal favorites of all my work—I talk about its origins here—and I’ve been delighted to see it come back over the last few months in no fewer than three different forms. The original recording, which was behind a paywall for years, is now available to stream for free through the network Maximum Fun. A wonderful new rendition narrated by Jonathan Todd Ross and Catherine Ho is included in my audio short story collection Syndromes. Perhaps best of all, the print adaptation appears in the July/August 2020 issue of Analog Science Fiction & Fact, which is on sale now. Because I’m hard at work on my current book project, this will probably be my last story in Analog for a while, and I can’t think of a better way to close out my recent run than with “Retention.” I’m very proud of all three versions, which interpret the same underlying text with intriguingly varied results, and I hope you’ll check at least one of them out.

Listening to Syndromes

I’m pleased beyond words to announce that my audio short fiction collection Syndromes, which includes all thirteen of my stories from Analog Science Fiction and Fact, has just been released by Recorded Books. (It was originally scheduled to drop in June, but in light of recent developments, it became one of a handful of titles to come out before everything shut down on that end. I’m glad that it managed to appear just under the wire, and I’m especially delighted by the dazzling cover art by Will Lee.) The wonderful narrators Jonathan Todd Ross and Catherine Ho trade reading duties on “Ernesto,” “The Spires,” “The Whale God,” “The Last Resort,” “Kawataro,” “Cryptids,” “The Boneless One,” “Inversus,” “Stonebrood,” “The Voices,” “The Proving Ground,” and “At the Fall,” before joining forces at the end for a new version of my audio play “Retention,” which strikes me as the standout track. Every story has been revised to fit into a single interconnected timeline, which stretches from 1937 through the near future, and even if you’ve read some of them before, you’ll discover a few new surprises. You can purchase it at Amazon or stream it through Audible or Libro.fm, so I hope that some of you will check it out—and let me know what you think!

The books and the wall

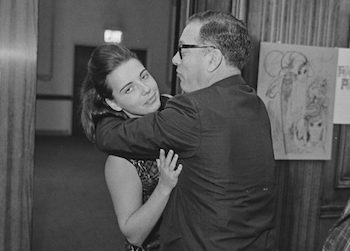

Yesterday, I published an essay titled “Asimov’s Empire, Asimov’s Wall” on the website Public Books, in which I discuss Isaac Asimov’s history of groping women and engaging in other forms of unwanted touching at conventions, in the workplace, and in private over the course of many decades. It’s a piece that I’ve had in mind for a long time, and I’ve come to think of it as a lost chapter of Astounding, which I might well have included in the book if I had delivered the final draft a few months later than I actually did. (I’m also very glad that the article includes the image reproduced above, which I found after going through thousands of photos in the Jay Kay Klein archive.) The response online so far has been overwhelming, including numerous firsthand accounts of his behavior, and I hope it leads to more stories about Asimov, as well as others. There’s a lot that I deliberately didn’t cover here, and it deserves to be taken further in the right hands.

Shoji Sadao (1926-2019)

The architect Shoji Sadao—who worked closely with Buckminster Fuller and the sculptor Isamu Noguchi—passed away earlier this month in Tokyo. I’m briefly quoted in Sadao’s obituary in the New York Times, which draws attention to his largely unsung role in the careers of these two brilliant but demanding men. My contact with Sadao was limited to a few emails and one short phone conversation intended to set up an interview that I’m sorry will never happen, but I’ll be discussing his legacy at length in my upcoming biography of Fuller, and you’ll be hearing a lot more about him here.

Onward and upward

Next week, I’ll be attending the 77th World Science Fiction Convention in Dublin, Ireland, which promises to be a lot of fun. Here’s my schedule as it currently stands:

- Thursday August 15, 2019, 3pm: “Current Politics Reflected in SFF.” Dr. Harvey O’Brien (M), Susan Connolly, Dr. Douglas Van Belle, Alec Nevala-Lee. “SFF is probably the genre that best mirrors present day society. If we examine SFF in both visual media and books, what can we learn about current politics playing out? What might future generations surmise about us?”

- Friday August 16, 2019, 11:30am: “Continuing Relevance of Older SF.” Sue Burke (M), Alec Nevala-Lee, Aliza Ben Moha, Robert Silverberg, Joe Haldeman. “We are in a new millennium, a literal Brave New World. Surely much of the fiction of the 20th century no longer holds relevance? The panel will discuss the fiction of the past and how it can still be relevant in the 21st century. What lessons from older authors – such as Orwell, Asimov, Butler, Delany, Kafka, and Atwood – can we apply to our app-loaded, social media-driven age?”

- Friday August 16, 2019, 5pm: “Comparable Futurist Movements.” Alec Nevala-Lee (M), Gillian Polack, Jeanine Tullos Hennig, Shweta Taneja. “How influenced by Afrofuturism are other world futurist movements such as Sinofuturism, Nippofuturism and Gulf futurism? Do they consider themselves a part of the same futurist tradition, or separate? The panel will discuss visions of the future from world cultures, how they are influenced by the root cultures they draw from, and how (if at all) they relate to Afrofuturism.”

- Saturday August 17, 2019, 2pm: Autographing

- Monday August 19, 2019, 10am: Kaffeeklatsch

- Monday August 19, 2019, 12:30pm: Reading

I might as well also mention that Astounding is up for the Hugo Award for Best Related Work, although the rest of the ballot is extremely formidable, and that it recently came out in paperback. (This new edition is virtually identical to the hardcover, but I took the opportunity to make a few small fixes and tweaks, and as far as I’m concerned, this is the definitive version.) And if you haven’t done so already, please check out the first three episodes of the wonderful Washington Post podcast Moonrise, which draws heavily on material from the book. Hope to see some of you soon!

Burrowing into The Tunnel

Last fall, it occurred to me that someone should write an essay on the parallels between the novel The Tunnel by William H. Gass, which was published in 1995, and the contemporary situation in America. Since nobody else seemed to be doing it, I figured that it might as well be me, although it was a daunting project even to contemplate—Gass’s novel is over six hundred pages long and famously impenetrable, and I knew that doing it justice would take at least three weeks of work. Yet it seemed like something that had to exist, so I wrote it up at the end of last year. For various reasons, it took a long time to see print, but it’s finally out now in the New York Times Book Review. It isn’t the kind of thing that I normally do, but it felt like a necessary piece, and I’m pretty proud of how it turned out. And if the intervening seven months don’t seem to have dated it at all, it only puts me in mind of what the radio host on The Simpsons once said about the DJ 3000 computer: “How does it keep up with the news like that?”

Notes from all over

It’s been a while since I last posted, so I thought I’d quickly run through a few upcoming items. On Saturday June 15, the Gene Siskel Film Center in Chicago will be showing Arwen Curry’s acclaimed new documentary Worlds of Ursula K. Le Guin. After the screening, I’ll be joining Mary Anne Mohanraj and Madhu Dubey on a panel to discuss the legacy of Le Guin, whose work increasingly seems to me like the culmination of the main line of science fiction in the United States, even if she doesn’t figure prominently in Astounding. (Which, by the way, is up for a Locus Award, the results of which will be announced at the end of this month.) The next day, on June 16, I’ll be hosting a session of my writing workshop, “Writing Fiction that Sells,” at Mary Anne’s Maram Makerspace in Oak Park. People seem to like the class, which runs from 10:00 to 11:45 am, and it would be great to see some of you there!

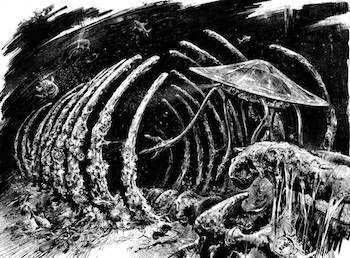

“At the Fall” and Beyond

The May/June issue of Analog Science Fiction and Fact includes my new novelette “At the Fall,” a big excerpt of which you can read now on the magazine’s official site. It’s one of my favorite stories that I’ve ever written, and I’m especially pleased by the interior illustration by Eldar Zakirov, pictured above, which you can see in greater detail here. I don’t think I’ll have the chance to write up the kind of extended account of this story’s conception that I’ve provided for other works in the past, but if you’re curious about its origins, Analog has posted a fun conversation on its blog in which I talk about it with Frank Wu, the author of “In the Absence of Instructions to the Contrary,” which appeared in the magazine a few years ago. (Our stories have a number of interesting parallels that only came to light after I wrote and submitted mine, and I think that the result is a nice case study of what happens when two writers end up independently pursuing a similar idea.) There’s also a thoughtful editorial by former Analog editor Stanley Schmidt about his relationship with John W. Campbell, inspired by a panel that we held at last year’s World Science Fiction Convention. Enjoy!